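- What is the attention mechanism for?

- How did self-attention evolve from attention?

- What is the difference between attention and self-attention?

- Why does self-attention work?

- How do we implement self-attention in PyTorch?

- What tricks did the Transformer authors apply to self-attention?

- How do we implement the Transformer's self-attention in PyTorch/TensorFlow 2.0?

- What does a complete Transformer block look like?

- How do we capture the order information in a sequence?

- How do we implement a complete Transformer model in PyTorch?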

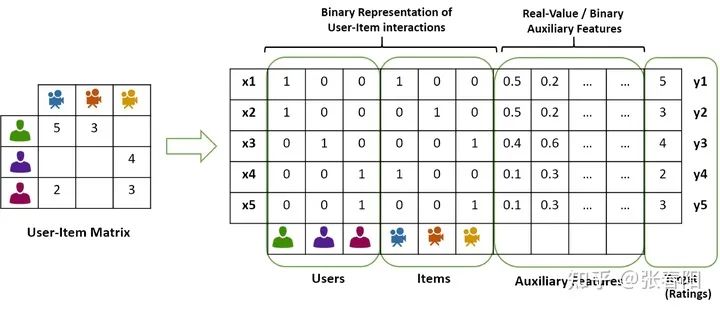

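What is the attention mechanism for?

How did self-attention evolve from attention?

An input vector and its corresponding output vector form a pair. For the next output vector, we have an entirely new input sequence and a different set of weights.

What is the difference between attention and self-attention?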

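- In a neural network you typically have an input layer, an output layer produced by applying an activation function, and, in an RNN, a state. When attention (AT) is applied to a layer, it is mostly applied to the outputs or the states, whereas self-attention (SA) mostly attends to the inputs.

- AT is often used to pass information from an encoder to a decoder: for example, the decoder's units receive input that AT derives from the encoder. In that case AT connects two different components, the encoder and the decoder. SA does not attend across two components; it attends only within the component it is applied to, so no decoder is involved. BERT, for example, uses SA and has no decoder.

- SA can be used many times, independently, within one model (for example, 18 times in the Transformer and 12 times in BERT). AT is usually used only once in a model, where it connects two components.

- SA is good at finding relationships between different parts of a single sequence; in lexical analysis, for instance, it helps capture how the words relate to each other. AT is better at finding relationships between two sequences, such as the source text and the translated text in a translation task. Note that SA also does well on translation, as the Transformer shows.

- AT can connect two different modalities, such as images and text. SA is mostly applied within a single modality, although if you do want to use SA you can combine the modalities into one sequence and apply SA to that.

- In my experience, SA is the more general structure in most cases. I have tried it for dimensionality reduction, feature representation, feature crossing and similar purposes in many tasks, and it often works well.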
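To make the contrast concrete, here is a minimal sketch in plain Python (no learned parameters, no framework). The helper `attend` and the toy vectors are assumptions made for illustration only: the same weighted-average operation acts as AT when queries come from a second sequence, and as SA when a sequence attends to itself.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def attend(queries, memory):
    """For each query, return a softmax-weighted average of the memory vectors.

    The memory vectors act as both keys and values (hypothetical helper).
    """
    dim = len(memory[0])
    outputs = []
    for q in queries:
        weights = softmax([dot(q, m) for m in memory])
        outputs.append([sum(w * m[d] for w, m in zip(weights, memory))
                        for d in range(dim)])
    return outputs


encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy "source" sequence
decoder_states = [[0.5, 0.5], [2.0, 0.0]]              # toy "target" sequence

cross_out = attend(decoder_states, encoder_states)  # AT: links two sequences
self_out = attend(encoder_states, encoder_states)   # SA: one sequence, itself
```

In both cases there is one output per query; the only difference is where the queries come from.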

Why does self-attention work?

-

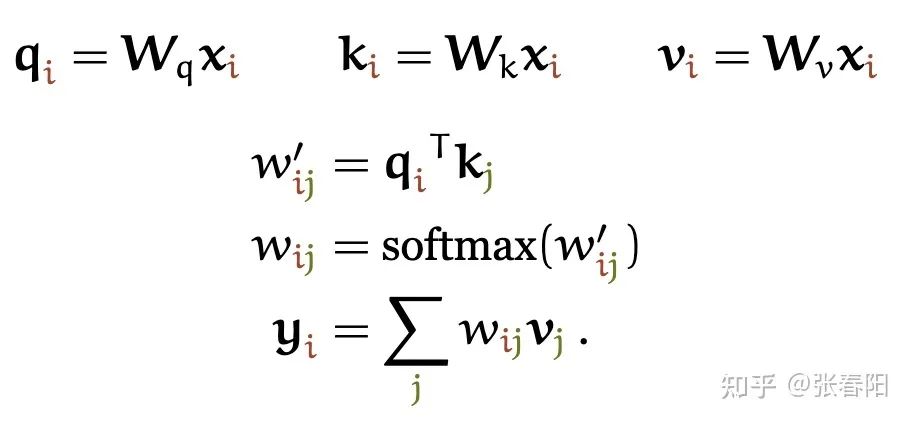

到目前为止,我们还没有用到需要学习的参数。基础的self-attention实际上完全取决于我们创建的输入序列,上游的embeding layer驱动着self-attention学习对于文本语义的向量表示。 -

Self-attention看到的序列只是一个集合(set),不是一个序列,它并没有顺序。如果我们重新排列集合,输出的序列也是一样的。后面我们要使用一些方法来缓和这种没有顺序所带来的信息的缺失。但是值得一提的是,self-attention本身是忽略序列的自然输入顺序的。
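The order-insensitivity described above can be checked numerically. The sketch below is plain Python with made-up input vectors; `basic_self_attention` is a hypothetical helper implementing parameter-free self-attention (dot-product weights, softmax, weighted sum).

```python
import math


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def basic_self_attention(xs):
    """Parameter-free self-attention over a list of equal-length vectors."""
    dim = len(xs[0])
    ys = []
    for x_i in xs:
        raw = [sum(a * b for a, b in zip(x_i, x_j)) for x_j in xs]
        w = softmax(raw)
        ys.append([sum(w_j * x_j[d] for w_j, x_j in zip(w, xs))
                   for d in range(dim)])
    return ys


xs = [[1.0, 0.0], [0.0, 2.0], [1.0, 1.0]]  # toy inputs (illustrative values)
perm = [2, 0, 1]                           # an arbitrary reordering

ys = basic_self_attention(xs)
ys_perm = basic_self_attention([xs[i] for i in perm])

# Reordering the inputs reorders the outputs in exactly the same way.
equivariant = all(
    math.isclose(a, b)
    for i, p in enumerate(perm)
    for a, b in zip(ys_perm[i], ys[p])
)
```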

How do we implement self-attention in PyTorch?

What I cannot create, I do not understand.

-- Feynman

```python
import torch
import torch.nn.functional as F

# assume we have some tensor x with size (b, t, k)
# (random placeholder values; b=2, t=5, k=4 are illustrative sizes)
x = torch.randn(2, 5, 4)

# raw weights: dot product of every input vector with every other, size (b, t, t)
raw_weights = torch.bmm(x, x.transpose(1, 2))
# - torch.bmm is a batched matrix multiplication. It
#   applies matrix multiplication over batches of
#   matrices.

# normalize each row so the weights for one output vector sum to 1
weights = F.softmax(raw_weights, dim=2)

# each output vector is a weighted sum of all input vectors, size (b, t, k)
y = torch.bmm(weights, x)
```

What tricks did the Transformer authors apply to self-attention?

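Why is the denominator $\sqrt{k}$? Imagine a vector in $\mathbb{R}^k$ whose entries all equal $c$. Its Euclidean length is $\sqrt{k}\,c$. Dividing by $\sqrt{k}$ therefore divides out the amount by which a growing dimension increases the length of an average vector.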
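A quick numerical check of that argument, as a sketch in plain Python. The value `c = 2.0` and the dimensions tried are arbitrary choices for illustration.

```python
import math


def euclidean_length(v):
    """Euclidean (L2) length of a vector given as a list of floats."""
    return math.sqrt(sum(x * x for x in v))


c = 2.0
rescaled = {}
for k in (4, 64, 256):
    length = euclidean_length([c] * k)      # grows like sqrt(k) * c
    rescaled[k] = length / math.sqrt(k)     # dividing by sqrt(k) removes the growth
```

After rescaling, the length no longer depends on the dimension `k`.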

Narrow and wide self-attention

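There are usually two ways to implement multi-head self-attention. The default is to cut the embedding vector into chunks: with an embedding vector of size 256 and 8 attention heads, we cut the vector into 8 chunks of dimension 32. For each chunk we generate queries, keys and values, each of size 32, which means the matrices $W_q$, $W_k$, $W_v$ of each head are all $32 \times 32$.

Alternatively, we can make the matrices $W_q$, $W_k$, $W_v$ of each head all $256 \times 256$ and apply every attention head to the whole 256-dimensional vector. The first approach is faster and more memory-efficient; the second can give better results, at the cost of more time and memory. These two approaches are called narrow and wide self-attention, respectively.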
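The cost difference can be estimated by counting the query/key/value weights under each scheme. This is a rough sketch in plain Python; the helper names are made up for illustration, and biases and the output projection are ignored.

```python
def split_into_heads(vector, heads):
    """Cut a vector into `heads` equal chunks (the narrow scheme)."""
    size, remainder = divmod(len(vector), heads)
    if remainder:
        raise ValueError("embedding size must be divisible by the number of heads")
    return [vector[i * size:(i + 1) * size] for i in range(heads)]


def qkv_weight_count(input_dim, output_dim, heads):
    """Weights in the query, key and value matrices of all heads."""
    return heads * 3 * input_dim * output_dim


emb, heads = 256, 8
chunks = split_into_heads(list(range(emb)), heads)   # 8 chunks of size 32

narrow_params = qkv_weight_count(32, 32, heads)      # 8 * 3 * 32 * 32
wide_params = qkv_weight_count(256, 256, heads)      # 8 * 3 * 256 * 256
```

With these numbers the wide variant has 64 times as many query/key/value weights as the narrow one, which is where the extra time and memory go.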

How do we implement the Transformer's self-attention in PyTorch/TensorFlow 2.0?

-

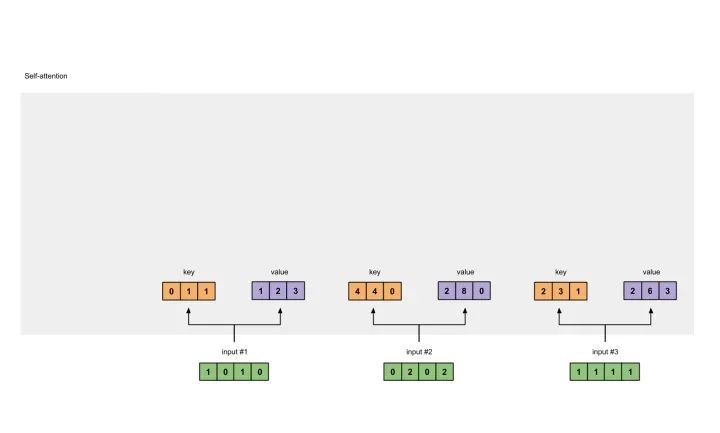

准备输入 -

初始化参数 -

获取key,query和value -

给input1计算attention score -

计算softmax -

给value乘上score -

给value加权求和获取output1 -

重复步骤4-7,获取output2,output3

```
Input 1: [1, 0, 1, 0]
Input 2: [0, 2, 0, 2]
Input 3: [1, 1, 1, 1]
```

As we will see later, the dimension of the value is also the dimension of the output.

```
# weights for key
[[0, 0, 1],
 [1, 1, 0],
 [0, 1, 0],
 [1, 1, 0]]

# weights for query
[[1, 0, 1],
 [1, 0, 0],
 [0, 0, 1],
 [0, 1, 1]]

# weights for value
[[0, 2, 0],
 [0, 3, 0],
 [1, 0, 3],
 [1, 1, 0]]
```

Keys, one input at a time:

```
               [0, 0, 1]
[1, 0, 1, 0] x [1, 1, 0] = [0, 1, 1]
               [0, 1, 0]
               [1, 1, 0]

               [0, 0, 1]
[0, 2, 0, 2] x [1, 1, 0] = [4, 4, 0]
               [0, 1, 0]
               [1, 1, 0]

               [0, 0, 1]
[1, 1, 1, 1] x [1, 1, 0] = [2, 3, 1]
               [0, 1, 0]
               [1, 1, 0]
```

The same computation for all three inputs at once:

```
               [0, 0, 1]
[1, 0, 1, 0]   [1, 1, 0]   [0, 1, 1]
[0, 2, 0, 2] x [0, 1, 0] = [4, 4, 0]
[1, 1, 1, 1]   [1, 1, 0]   [2, 3, 1]
```

Values:

```
               [0, 2, 0]
[1, 0, 1, 0]   [0, 3, 0]   [1, 2, 3]
[0, 2, 0, 2] x [1, 0, 3] = [2, 8, 0]
[1, 1, 1, 1]   [1, 1, 0]   [2, 6, 3]
```

Queries:

```
               [1, 0, 1]
[1, 0, 1, 0]   [1, 0, 0]   [1, 0, 2]
[0, 2, 0, 2] x [0, 0, 1] = [2, 2, 2]
[1, 1, 1, 1]   [0, 1, 1]   [2, 1, 3]
```

In practice, a bias vector may be added after the matrix multiplication.

```
            [0, 4, 2]
[1, 0, 2] x [1, 4, 3] = [2, 4, 4]
            [1, 0, 1]
```

```
softmax([2, 4, 4]) = [0.0, 0.5, 0.5]
```

The softmax values here are rounded to one decimal place; unrounded, they are approximately [0.06, 0.47, 0.47].

```
1: 0.0 * [1, 2, 3] = [0.0, 0.0, 0.0]
2: 0.5 * [2, 8, 0] = [1.0, 4.0, 0.0]
3: 0.5 * [2, 6, 3] = [1.0, 3.0, 1.5]
```

```
  [0.0, 0.0, 0.0]
+ [1.0, 4.0, 0.0]
+ [1.0, 3.0, 1.5]
-----------------
= [2.0, 7.0, 1.5]
```
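The whole walk-through can be reproduced in a few lines of plain Python. This is a sketch using the inputs and weight matrices from the example; `matmul` and `softmax` are small helpers written here for illustration. Because it uses the unrounded softmax, output 1 comes out as roughly [1.94, 6.68, 1.60] rather than the hand-rounded [2.0, 7.0, 1.5].

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def softmax(row):
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


inputs = [[1, 0, 1, 0], [0, 2, 0, 2], [1, 1, 1, 1]]
w_key = [[0, 0, 1], [1, 1, 0], [0, 1, 0], [1, 1, 0]]
w_query = [[1, 0, 1], [1, 0, 0], [0, 0, 1], [0, 1, 1]]
w_value = [[0, 2, 0], [0, 3, 0], [1, 0, 3], [1, 1, 0]]

# Step 3: derive key, query and value for every input
keys = matmul(inputs, w_key)
queries = matmul(inputs, w_query)
values = matmul(inputs, w_value)

# Step 4: attention scores = queries times keys transposed
keys_t = [list(col) for col in zip(*keys)]
scores = matmul(queries, keys_t)

# Step 5: softmax over each row of scores
weights = [softmax(row) for row in scores]

# Steps 6-8: weighted sum of the values, for all three inputs at once
outputs = matmul(weights, values)
```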

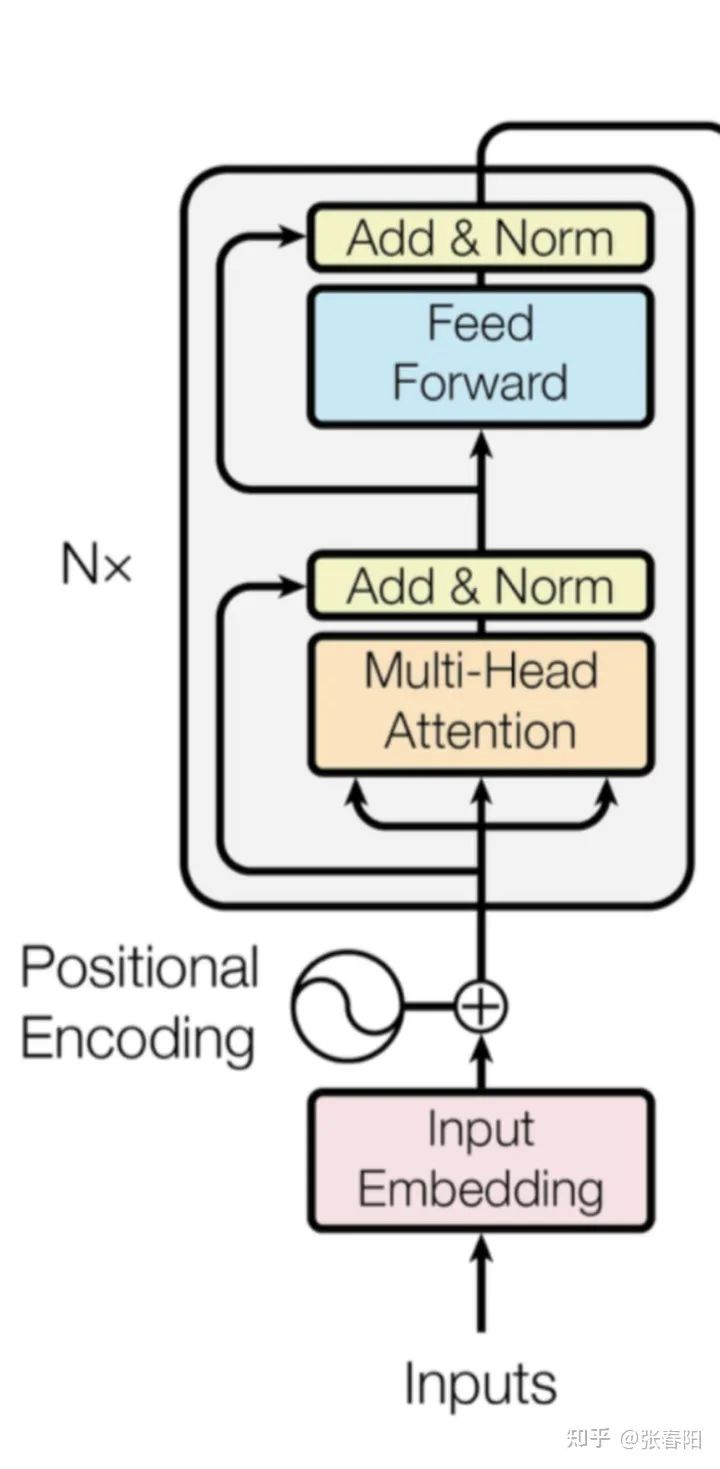

What does a complete Transformer block look like?

- self-attention layer
- normalization layer
- feed forward layer
- another normalization layer

Normalization and residual connections are widely used tricks that help deep neural networks train faster and more accurately.
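As a rough illustration of how the two tricks combine, here is a sketch in plain Python for a single vector. `layer_norm` and `residual_block` are hypothetical helpers without learnable scale or shift, and the "sublayer" is just a stand-in function.

```python
import math


def layer_norm(x, eps=1e-6):
    """Normalize one vector to zero mean and (approximately) unit variance."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def residual_block(x, sublayer):
    """Add the sublayer's output back onto its input, then normalize."""
    return layer_norm([a + b for a, b in zip(sublayer(x), x)])


# Stand-in sublayer that doubles its input (a real block would use
# self-attention or a feed-forward network here).
out = residual_block([1.0, 2.0, 3.0, 4.0], lambda v: [2 * t for t in v])
```

The residual path lets the input pass through unchanged alongside the sublayer, and the normalization keeps the result on a fixed scale.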

```python
import torch.nn as nn


class TransformerBlock(nn.Module):
    def __init__(self, k, heads):
        super().__init__()

        # SelfAttention is the multi-head self-attention module; it is not
        # defined in this snippet and must be provided separately.
        self.attention = SelfAttention(k, heads=heads)

        self.norm1 = nn.LayerNorm(k)
        self.norm2 = nn.LayerNorm(k)

        # feed-forward network with a hidden layer 4 times wider than the input
        self.ff = nn.Sequential(
            nn.Linear(k, 4 * k),
            nn.ReLU(),
            nn.Linear(4 * k, k))

    def forward(self, x):
        # residual connection around self-attention, then normalization
        attended = self.attention(x)
        x = self.norm1(attended + x)

        # residual connection around the feed-forward network, then normalization
        fedforward = self.ff(x)
        return self.norm2(fedforward + x)
```

How do we capture the order information in a sequence?

- position embeddings
- position encodings

```python
import math

import torch
import torch.nn as nn


class PositionalEncoder(nn.Module):
    def __init__(self, d_model, max_seq_len=80):
        super().__init__()
        self.d_model = d_model

        # build a constant pe matrix from pos and i
        # (the loop assumes d_model is even)
        pe = torch.zeros(max_seq_len, d_model)
        for pos in range(max_seq_len):
            for i in range(0, d_model, 2):
                # i is already the even dimension index, so the sin/cos pair
                # shares the exponent i / d_model
                angle = pos / (10000 ** (i / d_model))
                pe[pos, i] = math.sin(angle)
                pe[pos, i + 1] = math.cos(angle)

        # add a batch dimension: (1, max_seq_len, d_model)
        pe = pe.unsqueeze(0)
        # a buffer is saved and moved with the module, but not trained
        self.register_buffer('pe', pe)

    def forward(self, x):
        # make the embedding vectors relatively larger
        x = x * math.sqrt(self.d_model)
        # add the positional constants to the embedding
        seq_len = x.size(1)
        x = x + self.pe[:, :seq_len]
        return x
```

How do we implement a complete Transformer model in PyTorch?

- Tokenize
- Input Embedding
- Positional Encoder
- Transformer Block
- Encoder
- Decoder
- Transformer

```python
import importlib
import re


class Tokenize(object):

    def __init__(self, lang):
        # lang must name an importable package that exposes load(),
        # such as an installed spaCy model package
        self.nlp = importlib.import_module(lang).load()

    def tokenizer(self, sentence):
        # replace punctuation and special characters with spaces
        sentence = re.sub(
            r"[\*\"“”\n\\…\+\-\/\=\(\)‘•:\[\]\|’\!;]", " ", str(sentence))
        # collapse runs of spaces and repeated punctuation
        sentence = re.sub(r"[ ]+", " ", sentence)
        sentence = re.sub(r"\!+", "!", sentence)
        sentence = re.sub(r"\,+", ",", sentence)
        sentence = re.sub(r"\?+", "?", sentence)
        sentence = sentence.lower()
        return [tok.text for tok in self.nlp.tokenizer(sentence) if tok.text != " "]
```

```python
import torch.nn as nn


class Embedding(nn.Module):

    def __init__(self, vocab_size, d_model):
        super().__init__()
        self.d_model = d_model
        # lookup table mapping token ids to d_model-dimensional vectors
        self.embed = nn.Embedding(vocab_size, d_model)

    def forward(self, x):
        return self.embed(x)
```

The positional encoder is the same `PositionalEncoder` class shown in the section on capturing order information.

The Transformer block is again made up of:

- self-attention layer
- normalization layer
- feed forward layer
- another normalization layer

```python
import math

import torch
import torch.nn.functional as F


def attention(q, k, v, d_k, mask=None, dropout=None):
    # scaled dot-product scores between every query and every key
    scores = torch.matmul(q, k.transpose(-2, -1)) / math.sqrt(d_k)

    # mask out the tokens added as padding so that they come out of the softmax as 0
    if mask is not None:
        mask = mask.unsqueeze(1)
        scores = scores.masked_fill(mask == 0, -1e9)

    scores = F.softmax(scores, dim=-1)

    if dropout is not None:
        scores = dropout(scores)

    output = torch.matmul(scores, v)
    return output
```

This attention code uses a mask. Batching text requires all sequences to have the same length, so the shorter ones have to be padded. We do not want the padded positions to take part in the computation, so the mask sets their scores to a very large negative value, which makes their softmax output effectively 0. Masks are used in different ways in the attention, encoder and decoder parts of the Transformer; I will explain these in a later article.
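The effect of the mask is easy to see on a single row of scores. Below is a sketch in plain Python; `masked_softmax` is a hypothetical helper, and the scores and mask are made-up values in which the last position stands for padding.

```python
import math


def masked_softmax(scores, mask, fill=-1e9):
    """Softmax in which positions with mask == 0 are filled with a large negative score."""
    masked = [s if keep else fill for s, keep in zip(scores, mask)]
    m = max(masked)
    exps = [math.exp(s - m) for s in masked]
    total = sum(exps)
    return [e / total for e in exps]


scores = [2.0, 4.0, 4.0, 1.0]   # raw attention scores for one query
mask = [1, 1, 1, 0]             # 0 marks a padding token

weights = masked_softmax(scores, mask)
```

The padded position receives zero weight, and the remaining weights still sum to 1.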

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class MultiHeadAttention(nn.Module):

    def __init__(self, heads, d_model, dropout=0.1):
        super().__init__()

        self.d_model = d_model
        self.d_k = d_model // heads  # dimension of each head
        self.h = heads

        self.q_linear = nn.Linear(d_model, d_model)
        self.v_linear = nn.Linear(d_model, d_model)
        self.k_linear = nn.Linear(d_model, d_model)

        self.dropout = nn.Dropout(dropout)
        self.out = nn.Linear(d_model, d_model)

    def forward(self, q, k, v, mask=None):
        bs = q.size(0)

        # perform linear operation and split into h heads
        k = self.k_linear(k).view(bs, -1, self.h, self.d_k)
        q = self.q_linear(q).view(bs, -1, self.h, self.d_k)
        v = self.v_linear(v).view(bs, -1, self.h, self.d_k)

        # transpose to get dimensions bs * h * sl * d_k
        k = k.transpose(1, 2)
        q = q.transpose(1, 2)
        v = v.transpose(1, 2)

        # calculate attention using the attention function defined above
        scores = attention(q, k, v, self.d_k, mask, self.dropout)
        # concatenate heads and put through final linear layer
        concat = scores.transpose(1, 2).contiguous().view(bs, -1, self.d_model)
        output = self.out(concat)
        return output


class NormLayer(nn.Module):

    def __init__(self, d_model, eps=1e-6):
        super().__init__()
        self.size = d_model
        # two learnable parameters used for the normalisation
        self.alpha = nn.Parameter(torch.ones(self.size))
        self.bias = nn.Parameter(torch.zeros(self.size))
        self.eps = eps

    def forward(self, x):
        norm = self.alpha * (x - x.mean(dim=-1, keepdim=True)) \
            / (x.std(dim=-1, keepdim=True) + self.eps) + self.bias
        return norm


class FeedForward(nn.Module):

    def __init__(self, d_model, d_ff=2048, dropout=0.1):
        super().__init__()
        # We set d_ff as a default to 2048
        self.linear_1 = nn.Linear(d_model, d_ff)
        self.dropout = nn.Dropout(dropout)
        self.linear_2 = nn.Linear(d_ff, d_model)

    def forward(self, x):
        x = self.dropout(F.relu(self.linear_1(x)))
        x = self.linear_2(x)
        return x
```

<span class="code-snippet_outer"><span class="code-snippet__class"><span class="code-snippet__keyword">class</span> <span class="code-snippet__title">EncoderLayer</span>(<span class="code-snippet__title">nn</span>.<span class="code-snippet__title">Module</span>):</span></span><span class="code-snippet_outer"><br />

</span><span class="code-snippet_outer"> <span class="code-snippet__function"><span class="code-snippet__keyword">def</span> <span class="code-snippet__title">__init__</span><span class="code-snippet__params">(<span class="code-snippet__keyword">self</span>, d_model, heads, dropout=<span class="code-snippet__number">0</span>.<span class="code-snippet__number">1</span>)</span></span>:</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">super</span>().__init_<span class="code-snippet__number">_</span>()</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.norm_1 = Norm(d_model)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.norm_2 = Norm(d_model)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.attn = MultiHeadAttention(heads, d_model, dropout=dropout)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.ff = FeedForward(d_model, dropout=dropout)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.dropout_1 = nn.Dropout(dropout)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.dropout_2 = nn.Dropout(dropout)</span> <span class="code-snippet_outer"> <span class="code-snippet__function"><span class="code-snippet__keyword">def</span> <span class="code-snippet__title">forward</span><span class="code-snippet__params">(<span class="code-snippet__keyword">self</span>, x, mask)</span></span>:</span><span class="code-snippet_outer"> x2 = <span class="code-snippet__keyword">self</span>.norm_1(x)</span><span class="code-snippet_outer"> x = x + <span class="code-snippet__keyword">self</span>.dropout_1(<span class="code-snippet__keyword">self</span>.attn(x2,x2,x2,mask))</span><span class="code-snippet_outer"> x2 = <span class="code-snippet__keyword">self</span>.norm_2(x)</span><span class="code-snippet_outer"> x = x + <span 
class="code-snippet__keyword">self</span>.dropout_2(<span class="code-snippet__keyword">self</span>.ff(x2))</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">return</span> x</span><span class="code-snippet_outer"><br />

</span><span class="code-snippet_outer"><br />

</span><span class="code-snippet_outer"><span class="code-snippet__class"><span class="code-snippet__keyword">class</span> <span class="code-snippet__title">Encoder</span>(<span class="code-snippet__title">nn</span>.<span class="code-snippet__title">Module</span>):</span></span><span class="code-snippet_outer"><br />

</span><span class="code-snippet_outer"> <span class="code-snippet__function"><span class="code-snippet__keyword">def</span> <span class="code-snippet__title">__init__</span><span class="code-snippet__params">(<span class="code-snippet__keyword">self</span>, vocab_size, d_model, N, heads, dropout)</span></span>:</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">super</span>().__init_<span class="code-snippet__number">_</span>()</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.N = N</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.embed = Embedder(vocab_size, d_model)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.pe = PositionalEncoder(d_model, dropout=dropout)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.layers = get_clones(EncoderLayer(d_model, heads, dropout), N)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.norm = Norm(d_model)</span><span class="code-snippet_outer"><br />

</span><span class="code-snippet_outer"> <span class="code-snippet__function"><span class="code-snippet__keyword">def</span> <span class="code-snippet__title">forward</span><span class="code-snippet__params">(<span class="code-snippet__keyword">self</span>, src, mask)</span></span>:</span><span class="code-snippet_outer"> x = <span class="code-snippet__keyword">self</span>.embed(src)</span><span class="code-snippet_outer"> x = <span class="code-snippet__keyword">self</span>.pe(x)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">for</span> i <span class="code-snippet__keyword">in</span> range(<span class="code-snippet__keyword">self</span>.N):</span><span class="code-snippet_outer"> x = <span class="code-snippet__keyword">self</span>.layers[i](x, mask)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">return</span> <span class="code-snippet__keyword">self</span>.norm(x)</span>
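
The `EncoderLayer.forward` above normalizes the input before each sublayer and then adds the sublayer output back to it, i.e. `x + dropout(sublayer(norm(x)))`, with one final `Norm` at the end of `Encoder`. This is the "pre-norm" arrangement; the original Transformer paper instead applies the normalization after the residual sum. The pattern can be sketched in isolation as follows. Note the assumptions: `nn.LayerNorm` is used as a stand-in for the article's `Norm`, and the wrapper name `PreNormResidual` is hypothetical and not part of the listing.

```python
import torch
import torch.nn as nn


class PreNormResidual(nn.Module):
    """Hypothetical wrapper: x + dropout(sublayer(norm(x)))."""

    def __init__(self, d_model, sublayer, dropout=0.1):
        super().__init__()
        self.norm = nn.LayerNorm(d_model)  # assumption: stand-in for Norm
        self.sublayer = sublayer           # e.g. attention or feed-forward
        self.dropout = nn.Dropout(dropout)

    def forward(self, x):
        # Normalize first, run the sublayer, then add the untouched input back.
        return x + self.dropout(self.sublayer(self.norm(x)))


torch.manual_seed(0)
d_model = 8
linear = nn.Linear(d_model, d_model)  # toy sublayer for illustration only
block = PreNormResidual(d_model, linear).eval()  # eval() disables dropout

x = torch.randn(2, 5, d_model)  # (batch, sequence length, d_model)
y = block(x)                    # same shape as x
```

Because the residual path carries `x` through unchanged, a sublayer that outputs zeros leaves the input untouched, which is one reason this arrangement tends to be easier to train when many layers are stacked.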

<span class="code-snippet_outer"><span class="code-snippet__class"><span class="code-snippet__keyword">class</span> <span class="code-snippet__title">DecoderLayer</span>(<span class="code-snippet__title">nn</span>.<span class="code-snippet__title">Module</span>):</span></span><span class="code-snippet_outer"><br />

</span><span class="code-snippet_outer"> <span class="code-snippet__function"><span class="code-snippet__keyword">def</span> <span class="code-snippet__title">__init__</span><span class="code-snippet__params">(<span class="code-snippet__keyword">self</span>, d_model, heads, dropout=<span class="code-snippet__number">0</span>.<span class="code-snippet__number">1</span>)</span></span>:</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">super</span>().__init_<span class="code-snippet__number">_</span>()</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.norm_1 = Norm(d_model)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.norm_2 = Norm(d_model)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.norm_3 = Norm(d_model)</span> <span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.dropout_1 = nn.Dropout(dropout)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.dropout_2 = nn.Dropout(dropout)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.dropout_3 = nn.Dropout(dropout)</span> <span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.attn_1 = MultiHeadAttention(heads, d_model, dropout=dropout)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.attn_2 = MultiHeadAttention(heads, d_model, dropout=dropout)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.ff = FeedForward(d_model, dropout=dropout)</span><span class="code-snippet_outer"><br />

</span><span class="code-snippet_outer"> <span class="code-snippet__function"><span class="code-snippet__keyword">def</span> <span class="code-snippet__title">forward</span><span class="code-snippet__params">(<span class="code-snippet__keyword">self</span>, x, e_outputs, src_mask, trg_mask)</span></span>:</span><span class="code-snippet_outer"> x2 = <span class="code-snippet__keyword">self</span>.norm_1(x)</span><span class="code-snippet_outer"> x = x + <span class="code-snippet__keyword">self</span>.dropout_1(<span class="code-snippet__keyword">self</span>.attn_1(x2, x2, x2, trg_mask))</span><span class="code-snippet_outer"> x2 = <span class="code-snippet__keyword">self</span>.norm_2(x)</span><span class="code-snippet_outer"> x = x + <span class="code-snippet__keyword">self</span>.dropout_2(<span class="code-snippet__keyword">self</span>.attn_2(x2, e_outputs, e_outputs, </span><span class="code-snippet_outer"> src_mask))</span><span class="code-snippet_outer"> x2 = <span class="code-snippet__keyword">self</span>.norm_3(x)</span><span class="code-snippet_outer"> x = x + <span class="code-snippet__keyword">self</span>.dropout_3(<span class="code-snippet__keyword">self</span>.ff(x2))</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">return</span> x</span><span class="code-snippet_outer"><br />

</span><span class="code-snippet_outer"><br />

</span><span class="code-snippet_outer"><span class="code-snippet__class"><span class="code-snippet__keyword">class</span> <span class="code-snippet__title">Decoder</span>(<span class="code-snippet__title">nn</span>.<span class="code-snippet__title">Module</span>):</span></span><span class="code-snippet_outer"><br />

</span><span class="code-snippet_outer"> <span class="code-snippet__function"><span class="code-snippet__keyword">def</span> <span class="code-snippet__title">__init__</span><span class="code-snippet__params">(<span class="code-snippet__keyword">self</span>, vocab_size, d_model, N, heads, dropout)</span></span>:</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">super</span>().__init_<span class="code-snippet__number">_</span>()</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.N = N</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.embed = Embedder(vocab_size, d_model)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.pe = PositionalEncoder(d_model, dropout=dropout)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.layers = get_clones(DecoderLayer(d_model, heads, dropout), N)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.norm = Norm(d_model)</span><span class="code-snippet_outer"><br />

</span><span class="code-snippet_outer"> <span class="code-snippet__function"><span class="code-snippet__keyword">def</span> <span class="code-snippet__title">forward</span><span class="code-snippet__params">(<span class="code-snippet__keyword">self</span>, trg, e_outputs, src_mask, trg_mask)</span></span>:</span><span class="code-snippet_outer"> x = <span class="code-snippet__keyword">self</span>.embed(trg)</span><span class="code-snippet_outer"> x = <span class="code-snippet__keyword">self</span>.pe(x)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">for</span> i <span class="code-snippet__keyword">in</span> range(<span class="code-snippet__keyword">self</span>.N):</span><span class="code-snippet_outer"> x = <span class="code-snippet__keyword">self</span>.layers[i](x, e_outputs, src_mask, trg_mask)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">return</span> <span class="code-snippet__keyword">self</span>.norm(x)</span>
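
The `forward` methods above take `src_mask` and `trg_mask`, but the listing does not show how they are built. One common construction is sketched below under several assumptions: padding tokens use a hypothetical index `pad_idx`, a mask entry of `True` means "may attend", and the attention module broadcasts a `(batch, 1, src_len)` or `(batch, trg_len, trg_len)` mask across heads. None of these is confirmed by the code shown here, so adapt the shapes and polarity to how `MultiHeadAttention` actually applies its mask. The helper names `make_src_mask` and `make_trg_mask` are likewise illustrative.

```python
import torch


def make_src_mask(src, pad_idx):
    """Padding mask of shape (batch, 1, src_len); True marks real tokens."""
    return (src != pad_idx).unsqueeze(-2)


def make_trg_mask(trg, pad_idx):
    """Padding mask combined with a causal mask, shape (batch, trg_len, trg_len)."""
    pad_mask = (trg != pad_idx).unsqueeze(-2)  # (batch, 1, trg_len)
    t = trg.size(1)
    # Lower-triangular matrix: position i may only look at positions <= i.
    no_peek = torch.tril(torch.ones(1, t, t, device=trg.device)).bool()
    return pad_mask & no_peek


# Toy batch with pad_idx = 0 (an assumption for this example).
src = torch.tensor([[5, 6, 0]])
trg = torch.tensor([[1, 2, 0]])
src_mask = make_src_mask(src, pad_idx=0)  # shape (1, 1, 3)
trg_mask = make_trg_mask(trg, pad_idx=0)  # shape (1, 3, 3)
```

The source mask only hides padding, while the target mask additionally stops each decoder position from attending to later positions, which is what keeps training consistent with left-to-right generation.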

<span class="code-snippet_outer"><span class="code-snippet__class"><span class="code-snippet__keyword">class</span> <span class="code-snippet__title">Transformer</span>(<span class="code-snippet__title">nn</span>.<span class="code-snippet__title">Module</span>):</span></span> <span class="code-snippet_outer"> <span class="code-snippet__function"><span class="code-snippet__keyword">def</span> <span class="code-snippet__title">__init__</span><span class="code-snippet__params">(<span class="code-snippet__keyword">self</span>, src_vocab, trg_vocab, d_model, N, heads, dropout)</span></span>:</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">super</span>().__init_<span class="code-snippet__number">_</span>()</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.encoder = Encoder(src_vocab, d_model, N, heads, dropout)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.decoder = Decoder(trg_vocab, d_model, N, heads, dropout)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">self</span>.out = nn.Linear(d_model, trg_vocab)</span> <span class="code-snippet_outer"> <span class="code-snippet__function"><span class="code-snippet__keyword">def</span> <span class="code-snippet__title">forward</span><span class="code-snippet__params">(<span class="code-snippet__keyword">self</span>, src, trg, src_mask, trg_mask)</span></span>:</span><span class="code-snippet_outer"> e_outputs = <span class="code-snippet__keyword">self</span>.encoder(src, src_mask)</span><span class="code-snippet_outer"> d_output = <span class="code-snippet__keyword">self</span>.decoder(trg, e_outputs, src_mask, trg_mask)</span><span class="code-snippet_outer"> output = <span class="code-snippet__keyword">self</span>.out(d_output)</span><span class="code-snippet_outer"> <span class="code-snippet__keyword">return</span> output</span>

This is an original article; copyright belongs to 知行编程网. You are welcome to share it, and please credit the source when reposting.
